AI interview tools are now analyzing facial expressions to evaluate candidates during job interviews. These tools track micro-expressions, eye contact, and facial movements to assess emotions like stress, confidence, and engagement. Here's what you need to know:

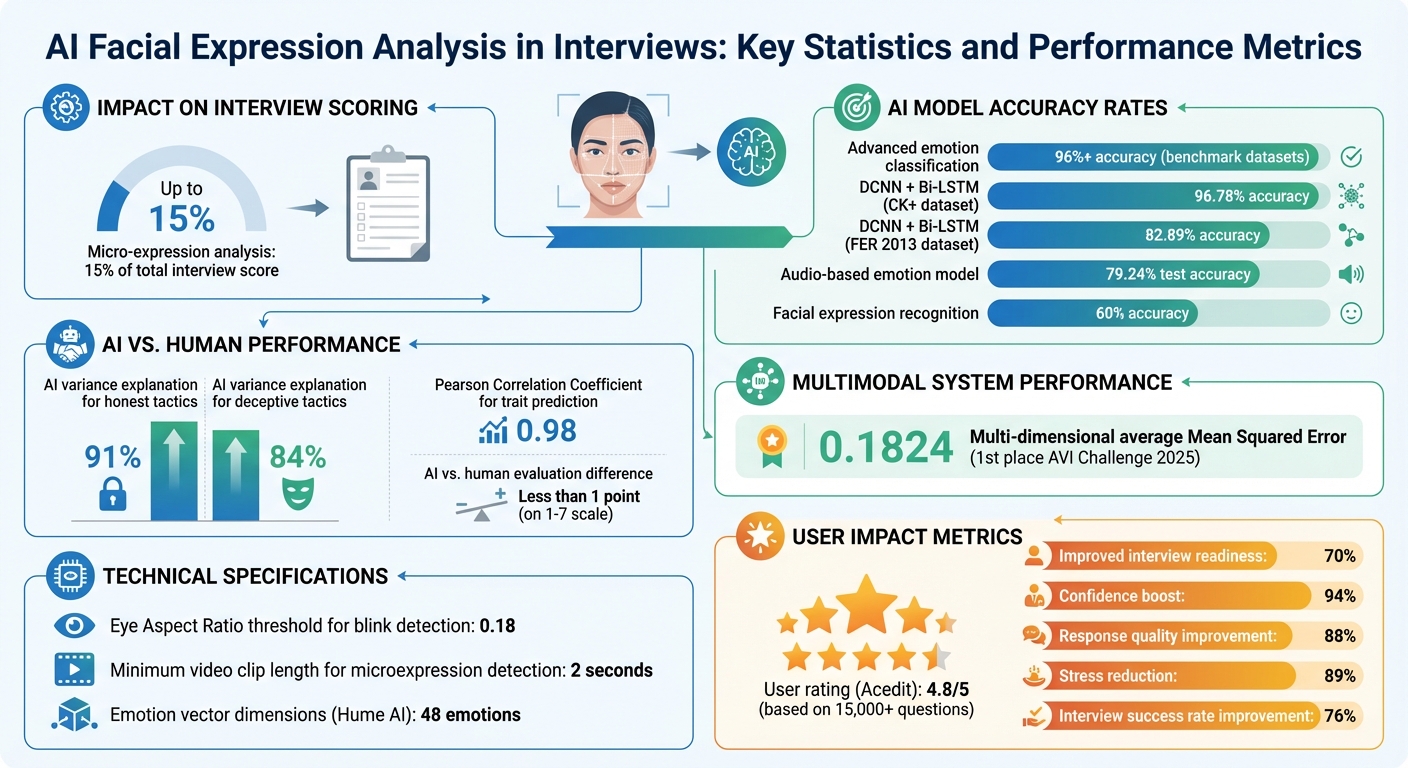

- Why It Matters: Nonverbal cues significantly impact interview outcomes. AI systems allocate up to 15% of an interview score to micro-expression analysis.

- How It Works: Using technologies like DeepFace, CNNs, and Bi-LSTM networks, AI maps facial features in real time to classify emotions such as happiness, neutrality, or stress.

- Key Features: Real-time processing tracks eye contact, blinks, and head movements, offering instant feedback during live or recorded interviews.

- Performance: Advanced models achieve high accuracy, with some reaching over 96% in emotion classification on benchmark datasets.

- Practical Use: Tools like Acedit provide coaching by analyzing facial expressions and offering feedback to improve engagement and confidence.

While these tools help candidates refine their nonverbal communication and interview preparation, they also raise concerns about potential biases and privacy issues. Always review a company's policy on biometric data before participating in AI-based interviews.

AI Facial Expression Analysis in Interviews: Key Statistics and Performance Metrics

Technologies Behind Facial Expression Analysis

AI Methods for Expression Analysis

AI systems designed to analyze facial expressions during interviews rely on layered deep learning architectures. These systems often combine Deep Convolutional Neural Networks (DCNNs), which extract spatial features from video frames, with Bidirectional Long Short-Term Memory (Bi-LSTM) networks, which track emotional changes over time.

To enhance precision, dual attention mechanisms are employed. These mechanisms prioritize key facial features - like the eyes and mouth - while filtering out irrelevant background details. A model combining DCNN, Bi-LSTM, and dual attention achieved impressive accuracy rates: 82.89% on the FER 2013 dataset and 96.78% on the CK+ dataset. These results highlight the system’s ability to classify emotions effectively.

The importance of facial data in understanding emotions is well-documented:

"Facial expressions hold large amount of rich and effective information as it conveys what really goes through their hearts. At times it is more accurate than other expressions such as language and voice tone."

- Scientific Reports

By integrating multiple tools, these systems create a comprehensive emotional profile. For instance, MediaPipe detects body landmarks, modified Haar Cascades handle smile detection, and Hume AI analyzes a 48-emotion vector. These methods, combined with verbal data processed by CrisperWhisper and Parselmouth, achieve near-human accuracy. In fact, feedback generated by Google Gemini showed less than a 1-point difference on a 1–7 scale when compared to human evaluations. Such advanced models enable real-time feedback, significantly enhancing the interview process.

Real-Time Processing During Interviews

Real-time analysis during live interviews demands swift and efficient processing. AI systems tackle this by using multi-threading, which allows intensive tasks - like head detection using YOLOv5 - to run in the background without disrupting the video feed.
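The background-thread pattern described above can be sketched with Python's standard library. This is a minimal illustration, not how any specific product is built: `slow_head_detector` is a hypothetical stand-in for a heavy model call such as a YOLOv5 inference, and the integer "frames" stand in for real video frames.

```python
import queue
import threading


def slow_head_detector(frame):
    """Hypothetical stand-in for a heavy model call (e.g. YOLOv5 inference)."""
    return {"frame_id": frame, "head_box": (0, 0, 10, 10)}


def detection_worker(frames, results, stop):
    """Run detection in the background until asked to stop."""
    while not stop.is_set():
        try:
            frame = frames.get(timeout=0.1)
        except queue.Empty:
            continue
        results.put(slow_head_detector(frame))


def run_capture_loop(num_frames):
    """Show every frame; hand frames to the detector only when it is free."""
    frames = queue.Queue(maxsize=1)  # holds at most one pending frame
    results = queue.Queue()
    stop = threading.Event()
    worker = threading.Thread(
        target=detection_worker, args=(frames, results, stop), daemon=True
    )
    worker.start()

    displayed = []
    for frame in range(num_frames):
        displayed.append(frame)  # the video feed itself is never blocked
        try:
            frames.put_nowait(frame)
        except queue.Full:
            pass  # detector is busy: skip detection for this frame

    stop.set()
    worker.join()

    detections = []
    while not results.empty():
        detections.append(results.get())
    return displayed, detections
```

The single-slot queue is the key design choice in this sketch: when the detector falls behind, frames are skipped for detection rather than queued up, so the displayed feed stays current.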

For example, the Eye Aspect Ratio (EAR) is calculated in real time to monitor blinks, with a threshold of 0.18 identifying closed eyes. Additionally, advanced gaze estimation models produce heatmaps that reveal where and for how long a candidate focuses their attention. These tools ensure smooth, dynamic feedback without compromising performance.
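To make the blink check concrete, here is a minimal sketch of the Eye Aspect Ratio using the commonly cited six-landmark definition. The 0.18 threshold comes from the text above; the landmark ordering (`p1` and `p4` as the eye corners) and the `min_closed_frames` parameter are assumptions added for illustration.

```python
import math

EAR_CLOSED_THRESHOLD = 0.18  # threshold quoted in the article


def eye_aspect_ratio(eye):
    """Compute EAR from six (x, y) landmarks ordered p1..p6.

    p1 and p4 are the horizontal eye corners; p2/p6 and p3/p5 are the
    upper/lower eyelid pairs (assumed ordering).
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def count_blinks(ear_series, threshold=EAR_CLOSED_THRESHOLD, min_closed_frames=2):
    """Count runs of at least min_closed_frames consecutive frames below threshold."""
    blinks = 0
    closed_run = 0
    for ear in ear_series:
        if ear < threshold:
            closed_run += 1
        else:
            if closed_run >= min_closed_frames:
                blinks += 1
            closed_run = 0
    if closed_run >= min_closed_frames:  # series ended while the eye was closed
        blinks += 1
    return blinks
```

Requiring a short run of closed frames, rather than a single frame, is one simple way to avoid counting landmark jitter as a blink.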


Research on Facial Expression Analysis

AI vs. Human Emotion Recognition

Recent studies suggest that AI can recognize honesty and deception in candidates more effectively than human evaluators. In 2024, researchers from National Taiwan Normal University - Hung Yue Suen, Kuo En Hung, Chewei Liu, Yu Sheng Su, and Han Chih Fan - published their findings in IEEE Transactions on Computational Social Systems. They analyzed 121 job applicants during 12- to 15-minute AI video interviews, using deep learning models that combine 3D-CNN, FaceMesh, and LSTM architectures.

The AI models accounted for 91% of the variance in honest impression management (IM) tactics and 84% of the variance in deceptive tactics. This performance significantly outpaced 30 human interviewers assessing the same recordings. The researchers highlighted:

"Our models explained 91% and 84% of the variance in honest and deceptive IMs, respectively, and showed a stronger correlation with self-reported IM scores compared to human interviewers."

The AI achieved these results by identifying temporal patterns in facial expressions and head movements - subtle cues that humans often overlook. This emphasizes the importance of integrating multiple behavioral signals, a challenge multimodal approaches are designed to address.
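For readers unfamiliar with "variance explained", it is usually reported as the coefficient of determination (R²). The sketch below shows that standard formula; it is an illustration only, and the text does not state exactly how the study computed its 91% and 84% figures.

```python
def r_squared(actual, predicted):
    """Share of variance in `actual` accounted for by `predicted` (standard R^2)."""
    mean_actual = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    return 1.0 - ss_res / ss_tot
```

A value of 1.0 means the predictions match the self-reported scores exactly, while 0.0 means they do no better than always predicting the average score.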

Multimodal Approaches to Behavioral Analysis

Incorporating facial expressions, voice tone, and body language creates a fuller understanding of candidate behavior. For example, during the AVI Challenge 2025, a team from Hefei University of Technology, led by Jia Li and Yang Wang, developed a multimodal framework to evaluate five dimensions: integrity, cooperation, social versatility, development orientation, and overall employability.

Their system used SigLIP2 for visual data, Emotion2Vec for audio features, and SFR-Mistral-Embedding for text analysis. By processing six candidate responses through a "Shared Compression Multilayer Perceptron" (MSCMLP), the framework achieved a multi-dimensional average Mean Squared Error of 0.1824, earning first place in the competition. The audio-based emotion model achieved 79.24% test accuracy, while facial expression recognition reached 60%.

This multimodal approach captures both explicit and subtle behavioral cues. Research also demonstrated that such systems achieved a Pearson Correlation Coefficient of 0.98 when predicting traits like "Excited-Friendly".
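The two metrics quoted in this section, average Mean Squared Error across dimensions and the Pearson Correlation Coefficient, can be sketched as follows. The dictionary layout (one list of scores per dimension) is an assumption for illustration, not the challenge's actual data format.

```python
import math


def mse(actual, predicted):
    """Mean squared error between two equal-length score lists."""
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


def average_mse(actual_by_dim, predicted_by_dim):
    """Average the per-dimension MSE over all scored dimensions."""
    per_dim = [mse(actual_by_dim[d], predicted_by_dim[d]) for d in actual_by_dim]
    return sum(per_dim) / len(per_dim)


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)
```

Lower is better for MSE, while a Pearson value close to 1 means predicted and reference scores rise and fall together.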

FACS-CNN-LSTM Models in Practice

Advanced hybrid models now integrate Facial Action Coding System (FACS) data to detect micro muscle movements known as Action Units (AUs). This level of detail goes beyond categorizing emotions like "happy" or "nervous", offering a more precise look at behavior. By combining Convolutional Neural Networks (CNNs) for spatial analysis with Long Short-Term Memory (LSTM) networks for temporal tracking, these systems can identify microexpressions in video clips as short as two seconds.

When facial dynamics are paired with speech features and head-motion units (kinemes), AI systems outperform traditional methods of assessment. Research published in the Journal of Real-Time Image Processing found that these models "can provide better predictive power than can human-structured interviews, personality inventories, occupation interest testing, and assessment centers".

Additional precision comes from analyzing blink patterns and gaze direction to evaluate anxiety and attention levels. Attention-based fusion mechanisms further enhance the AI's ability to determine which cues - facial, vocal, or motion - are most relevant for specific traits. This makes the AI's assessments more transparent and easier to interpret.
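As a rough illustration of attention-based fusion, the sketch below turns per-modality relevance scores into softmax weights and blends the feature vectors accordingly. In a trained model the relevance scores would be learned; here they are supplied by hand, and the modality names and function names are hypothetical.

```python
import math


def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fuse(features, relevance):
    """Blend per-modality feature vectors using softmax attention weights.

    features:  dict of modality name -> list of floats (same length each)
    relevance: dict of modality name -> raw relevance score
    Returns the fused vector and the weight given to each modality.
    """
    names = list(features)
    weights = softmax([relevance[name] for name in names])
    dim = len(features[names[0]])
    fused = [
        sum(w * features[name][i] for w, name in zip(weights, names))
        for i in range(dim)
    ]
    return fused, dict(zip(names, weights))
```

Returning the weights alongside the fused vector is what supports interpretation: they show how much each cue contributed to a given trait score.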

How AI Interview Tools Use Facial Expression Analysis

Real-Time Emotion Detection for Engagement and Confidence

AI interview tools analyze facial expressions and eye contact to provide instant feedback on engagement and confidence. Using machine learning libraries like Google's ML Kit, these tools process video data during practice interviews to pinpoint moments when non-verbal cues don't align with spoken words.

In July 2025, researchers at Dr. Vishwanath Karad MIT World Peace University introduced an AI-powered Android application that combined facial analysis with conversational AI. Led by Sanika Rangnath Jagtap and Vedant Kulkarni, the study revealed that 70% of participants improved their interview readiness and communication skills after multiple sessions with real-time feedback. The tool focused on eye contact consistency and subtle facial movements, helping users refine their non-verbal communication. Many platforms also integrate voice recognition with facial analysis to ensure that facial expressions match verbal responses, promoting authenticity. According to user reports, these AI-driven coaching tools have led to a 94% boost in confidence and an 88% improvement in response quality.

By leveraging these detection capabilities, AI interview tools provide real-time coaching to help candidates fine-tune their non-verbal communication.

AI-Powered Interview Simulations with Facial Analysis

AI-powered simulations take facial analysis a step further by offering an interactive and comprehensive interview preparation experience. For instance, Acedit combines facial expression tracking with real-time coaching during mock interviews on major video platforms. This AI-driven Chrome extension works seamlessly with Zoom, Microsoft Teams, and Google Meet, guiding candidates to maintain professional non-verbal behavior under pressure. With a 4.8/5 user rating based on over 15,000 questions practiced, Acedit highlights how facial analysis can elevate interview preparation.

These tools also tackle common issues like hesitation, which can affect both verbal responses and facial composure. Research shows that practicing with AI systems that monitor eye contact and facial expressions can reduce candidate stress by 89% and improve interview success rates by 76%. By building muscle memory through repeated practice, candidates become better equipped to stay engaged and composed, even when faced with unexpected questions.

Conclusion

What Job Seekers Should Know

AI tools are changing how candidates prepare for interviews, offering feedback on non-verbal cues that often go unnoticed in traditional prep. In fact, about 70% of users report feeling more prepared for interviews after using AI tools multiple times.

To get the most out of these tools, record and review your practice sessions to spot habits like avoiding eye contact or showing signs of stress, such as facial tremors. Techniques like the "Three-Second Rule" - pausing briefly before answering tough questions - can help you appear thoughtful rather than unsure. However, don’t overdo it. Trying too hard to optimize your behavior might make you seem unnatural to human interviewers. Using the STAR method (Situation, Task, Action, Result) with an added focus on "Learning" can also help frame your answers to reflect growth and adaptability.

Still, it’s important to recognize the limitations of AI facial analysis. These systems can carry biases, assuming universal meanings for expressions, which may disadvantage neurodivergent candidates or those from diverse backgrounds. Privacy concerns are another issue - some companies have already stopped using facial analysis due to regulatory scrutiny and ethical questions. Before your interview, check the company’s policy on biometric data to ensure you’re comfortable with how your information will be used. Addressing these challenges is a growing priority, as research shows that non-verbal cues and authenticity have a significant impact on interview outcomes.

By understanding these tools and their limitations, you can enhance your preparation while staying mindful of how AI continues to shape interview coaching.

What's Next for AI in Interview Preparation

Looking ahead, AI is set to become even more personalized and adaptive. Future tools are expected to integrate facial analysis with voice recognition and conversational AI for a more complete evaluation. These systems will use confidence-weighted fusion to ensure accuracy, even if one data source is less reliable. Instead of focusing on detecting deception, newer tools aim to identify authenticity and genuine engagement, rewarding candidates who show real interest and enthusiasm rather than rehearsed answers.
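One simple reading of confidence-weighted fusion is a weighted average in which each source's score counts in proportion to how reliable it is. The sketch below assumes each modality reports a score and a confidence between 0 and 1; the function name and input format are illustrative, not taken from any particular tool.

```python
def confidence_weighted_fusion(readings):
    """Combine (score, confidence) pairs into one confidence-weighted score.

    Returns None when no source reports any confidence.
    """
    total_confidence = sum(conf for _, conf in readings)
    if total_confidence == 0:
        return None
    return sum(score * conf for score, conf in readings) / total_confidence
```

With this scheme, a face reading taken in poor lighting (low confidence) barely shifts the result, while a clear audio reading dominates it.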

Emerging technologies are also creating dynamic interview scenarios that adapt in real-time based on your responses. With mobile-first platforms gaining traction, advanced behavioral analysis tools are now accessible on Android and iOS, making professional-level coaching available on the go. AI tools are evolving to provide instant feedback during practice sessions, helping you fine-tune your non-verbal communication and build the confidence needed to handle high-stress interviews. Platforms like Acedit are leading the way, offering job seekers an all-in-one solution for interview preparation that keeps pace with these advancements.


FAQs

How can I prepare for facial-expression scoring in an AI interview?

To get ready for facial-expression scoring, work on showing genuine and confident expressions during interviews. Pay attention to these key points:

- Maintain steady eye contact while avoiding excessive blinking.

- Adopt a relaxed and approachable expression, such as a slight smile.

- Steer clear of nervous habits, like fidgeting or unnecessary gestures.

Practicing in front of a mirror or recording yourself can help you refine your expressions to appear more natural and self-assured.

Can AI misread my expressions because of culture or neurodivergence?

AI tools designed to interpret facial expressions often stumble due to differences in cultural norms and the unique ways neurodivergent individuals express emotions. For instance, a facial gesture that signals confidence in one culture might carry an entirely different meaning in another. On top of that, neurodivergent individuals may display emotions in ways that don't align with neurotypical patterns, which many AI systems are based on. These challenges emphasize the importance of developing AI models that are more inclusive and sensitive to diverse ways of expression.

What biometric data is captured, and how is it stored or shared?

The system gathers facial expressions, eye contact, and vocal attributes as biometric data. This information is analyzed in real-time to assess emotions and engagement levels during interviews. However, the details about how this data is stored or shared remain unspecified, and its use is limited to analysis during the interview itself.